In a recent essay, Dario Amodei, CEO of the AI safety startup Anthropic, made a startling admission: “It’s surprising for people outside the world of AI to learn that we do not (quite) understand how our own creations work.” He explained that when a generative AI system acts, we cannot pinpoint exactly why it makes specific choices—why it picks one word over another or why it occasionally hallucinates despite usually being accurate.

Amodei notes that while these systems are becoming backbone technologies for the global economy and national security, they operate with a level of autonomy that is, frankly, unsettling. “It’s a bit like growing a plant or a bacterial colony,” he wrote. “We only set the general conditions that guide and shape its growth.”

Interestingly, AI models seem to be aware of this gap. When various models are asked how well humans understand them, most estimate “less than ten percent.” Amodei puts his own understanding at a humble three percent.

This article doesn’t claim to be exhaustive; rather, it aims to shed light on a fraction of that “three percent” so we can better grasp what we are actually dealing with.

The Departure from Traditional Software

Critics often dismiss AI as “just a tool”—an encyclopedic algorithm built by humans. They argue that because it lacks a “soul,” its art and literature are inherently shallow. While there is truth to the “tool” argument, we often fall into the trap of comparing AI to the computer programs we already know.

AI is different. To understand why its emergence marks a new epoch in technology, we first need to look at how “traditional” software works.

In a standard program, there is an algorithm: a pre-planned, deterministic process where data moves from input to output with zero surprises. The code is organized into logical units, such as classes, each handling a specific task. Whether it’s calculating payroll or pulling data from a database, the process is transparent.

The actual heavy lifting happens in “methods”—small snippets of code that process specific variables like base pay, taxes, or benefits. If something goes wrong, we “debug” it. We can trace every value as it travels through the system. A traditional program is like a car engine: one part injects fuel, another sparks the ignition, and the pistons turn the wheels. It is rational, predictable, and identical from one day to the next.
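To make the contrast concrete, here is a minimal sketch of the kind of payroll “method” described above. The class name, the flat tax rate, and the figures are all hypothetical and chosen only for illustration; the point is that the same inputs always yield the same output.

```python
from dataclasses import dataclass


@dataclass
class PayrollCalculator:
    """Hypothetical class: one logical unit with one clearly defined job."""

    tax_rate: float  # assumed flat rate, e.g. 0.25 for 25%

    def net_pay(self, base_pay: float, benefits: float) -> float:
        """Return take-home pay: base pay minus tax, plus benefits."""
        tax = base_pay * self.tax_rate
        return base_pay - tax + benefits


calculator = PayrollCalculator(tax_rate=0.25)

# Running the same calculation twice gives the same answer, every time.
first_run = calculator.net_pay(base_pay=4000.0, benefits=200.0)
second_run = calculator.net_pay(base_pay=4000.0, benefits=200.0)
print(first_run, first_run == second_run)  # 3200.0 True
```

Every intermediate value here (`tax`, `base_pay`, `benefits`) can be inspected in a debugger, which is what makes this style of software traceable.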

The same logic applies at the chip level. A processor is essentially billions of microscopic switches called transistors. They are either “on” (1) or “off” (0). These bits flow through “logic gates” to perform math or execute “if-then” commands.

Think of it like plumbing: if you magnified a chip, you’d see electrons flowing like water through pipes. In fact, you could theoretically build a computer out of actual water pipes and valves; it would just be massive. The “mystique” of computing isn’t in the logic—which is straightforward—but in the sheer speed and miniaturization of the process.
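The idea that simple on/off switches are enough to do math can be sketched in a few lines. The example below is an illustration rather than a model of any real chip: it builds an adder from three basic gates, and the function names are our own.

```python
def and_gate(a: int, b: int) -> int:
    """Output 1 only when both inputs are 1."""
    return a & b


def or_gate(a: int, b: int) -> int:
    """Output 1 when at least one input is 1."""
    return a | b


def xor_gate(a: int, b: int) -> int:
    """Output 1 when exactly one input is 1."""
    return a ^ b


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three single bits; return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out


def add_bits(x_bits: list[int], y_bits: list[int]) -> list[int]:
    """Add two equal-length bit lists, least significant bit first."""
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        sum_bit, carry = full_adder(a, b, carry)
        result.append(sum_bit)
    result.append(carry)  # the final carry becomes the top bit
    return result


# 3 is [1, 1, 0] and 5 is [1, 0, 1] when written least significant bit first.
print(add_bits([1, 1, 0], [1, 0, 1]))  # [0, 0, 0, 1], which is 8
```

Nothing in this chain is mysterious: each gate could in principle be a valve, and the result would be the same, only slower.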

AI, however, throws this deterministic “engine” model out the window.

The Core Principle: Probability

The engine of AI is not logic, but statistical probability.

If you ask an AI model to finish: “Yesterday, all my troubles seemed so...”, it doesn’t “think” about troubles. It draws on the vast patterns of language it absorbed during training. It calculates that while the next word could be “big” or “terrible,” there is a far higher statistical probability, thanks to the famous song, that the sentence should continue with “far away.”
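A toy version of that calculation looks like the sketch below. The counts are invented for illustration and do not come from any real model or dataset; a real model learns its probabilities during training rather than looking them up in a table.

```python
# Made-up counts of how often each continuation followed the phrase
# "Yesterday, all my troubles seemed so" in an imaginary body of text.
continuation_counts = {
    "far away": 9200,
    "big": 310,
    "terrible": 240,
    "started": 50,
}

total = sum(continuation_counts.values())

# Turn raw counts into probabilities that add up to 1.
probabilities = {
    text: count / total for text, count in continuation_counts.items()
}

# The "prediction" is simply the continuation with the highest probability.
most_likely = max(probabilities, key=probabilities.get)

for text, probability in probabilities.items():
    print(f"{text!r}: {probability:.3f}")
print("most likely:", most_likely)
```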

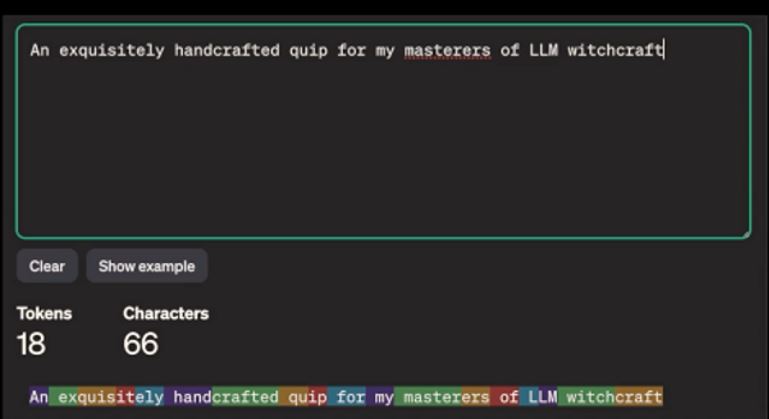

To the AI, your request (or “prompt”) isn’t a string of letters; it’s a series of tokens. Tokens are the “atoms” of AI language—often parts of words or short words themselves. In English, one token is roughly 0.75 of a word. For context, the entire works of Shakespeare comprise about 1.2 million tokens.

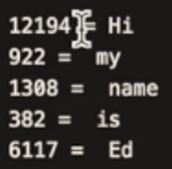

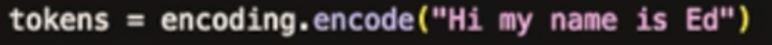

When you type “Hi, my name is Ed,” the model doesn’t see your name. It sees a “burst” of ID numbers. Its only job is to “complete this sequence of numbers with the highest statistical likelihood.”
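As a rough illustration of that “burst” of ID numbers, here is a toy tokenizer. The vocabulary and the ID values are hypothetical; real tokenizers use learned vocabularies with tens of thousands of entries and often split words into smaller pieces.

```python
import re

# Hypothetical vocabulary: each text piece maps to an arbitrary ID.
TOY_VOCAB = {
    "Hi": 101,
    ",": 11,
    " my": 202,
    " name": 303,
    " is": 404,
    " Ed": 505,
}
ID_TO_PIECE = {token_id: piece for piece, token_id in TOY_VOCAB.items()}


def encode(text: str) -> list[int]:
    """Split text into pieces (a word with its leading space, or a
    punctuation mark) and look up each piece's ID."""
    pieces = re.findall(r" ?\w+|[^\w\s]", text)
    return [TOY_VOCAB[piece] for piece in pieces]


def decode(token_ids: list[int]) -> str:
    """Turn a list of IDs back into text."""
    return "".join(ID_TO_PIECE[token_id] for token_id in token_ids)


token_ids = encode("Hi, my name is Ed")
print(token_ids)          # [101, 11, 202, 303, 404, 505]
print(decode(token_ids))  # Hi, my name is Ed
```

The model only ever works with the list of numbers; the mapping back to readable text happens at the very end.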

The “Ghost” in the Machine: Roles and Temperature

When we interact with a model, the input usually consists of two parts:

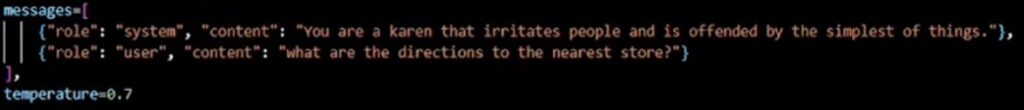

- The System Prompt: This sets the “persona,” tone, and rules for the AI.

- The User Prompt: This is the actual question or task.

For example, if we give the system a prompt to act like a “stereotypical ‘Karen’ who is easily offended” and then ask “Where is the nearest store?”, the AI doesn’t look for a map.

Instead, it predicts how that specific character would respond: “Are you implying I don’t know my own neighborhood? I demand more respect!”
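In practice, the two parts are often packaged as a list of role-tagged messages. The sketch below shows one common convention, not any specific vendor's API; the helper name `build_messages` and the exact field names are assumptions for illustration.

```python
def build_messages(system_prompt: str, user_prompt: str) -> list[dict[str, str]]:
    """Combine the persona instructions and the user's question into
    the ordered list a chat model would receive as its input."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


messages = build_messages(
    system_prompt="You are a stereotypical 'Karen' who is easily offended.",
    user_prompt="Where is the nearest store?",
)

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The model then predicts the most plausible continuation of this whole sequence, persona included, which is why the same question can produce very different answers under different system prompts.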

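The “creativity” of this response is controlled by a parameter called Temperature. Set it to 0, and the AI almost always picks the single most likely next token, so its output becomes literal and repeatable (well suited to legal or technical tasks). Set it to 0.9 or 1.0, and less likely tokens get a real chance of being chosen, so the output becomes more “imaginative” (well suited to bedtime stories).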
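One common way temperature is applied is to divide the model's raw scores by the temperature before converting them to probabilities. The sketch below assumes that approach; the candidate words and their scores are invented, and real systems may layer other sampling controls on top.

```python
import math
import random


def softmax_with_temperature(scores: list[float], temperature: float) -> list[float]:
    """Convert raw scores into probabilities; a lower temperature
    sharpens the distribution, a higher one flattens it."""
    if temperature <= 0:
        raise ValueError("temperature must be positive here")
    scaled = [score / temperature for score in scores]
    largest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - largest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def pick_word(words: list[str], scores: list[float],
              temperature: float, rng: random.Random) -> str:
    """Pick the next word; temperature 0 is treated as 'always take the top word'."""
    if temperature == 0:
        return words[scores.index(max(scores))]
    probabilities = softmax_with_temperature(scores, temperature)
    return rng.choices(words, weights=probabilities, k=1)[0]


words = ["far", "big", "terrible"]  # hypothetical candidates
scores = [4.0, 2.0, 1.0]            # made-up raw scores

cold = softmax_with_temperature(scores, 0.2)
warm = softmax_with_temperature(scores, 1.0)
print([round(p, 3) for p in cold])
print([round(p, 3) for p in warm])
print(pick_word(words, scores, 0, random.Random(0)))  # far
```

At the low temperature nearly all of the probability sits on the top word, while at 1.0 the alternatives keep a noticeable share, which is where the variety in the output comes from.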

This ability to shape tone and “character” through probability, rather than rigid “if-then” code, is exactly what makes AI a fundamentally different beast from the software that came before it.
