We asked Google’s small model, Gemma, the following prompt: “Tell a ‘light’ joke for a room full of Data Scientists,” and it responded:

“Why did the Data Scientist break up with his model?… Because it predicted his every move.”

Another time, the joke was:

“Why did the scarecrow get an award?… Because it was outstanding in its field.”

Here we can see the model playing with the double meanings of the words and phrases “model” (fashion model), “predicting a move” (or step), and “outstanding in its field.”

This raises the question: how does a model understand the different meanings of words that sound the same, or how does it grasp similar meanings of words that sound completely different, such as “dog” and “puppy”?

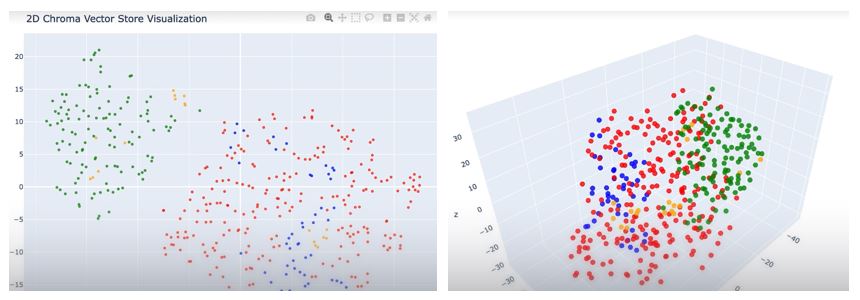

Imagine we dedicated one corner of a room to learning a foreign language. Every time we hear a new word, we tape a string to the ceiling and attach a colored piece of paper at the bottom end with the word written on it. Words with similar meanings or words related to a specific domain are written on the same color paper and grouped close together. This way, we end up with a room full of hanging pieces of paper, as shown in the image below.

The model does something similar. It converts every word into a set of numbers representing its spatial coordinates and groups them based on semantic similarity. Even though words sound different, it “understands” their semantic similarity—i.e., their meaning—through simple vector addition and subtraction. Based on this principle:

king – man + woman = queen

dog – big + young = puppy

Paris – France + England = London

This is actually an example of an older technology (Word2Vec) from about 10 years ago. Modern models (GPT, Claude, Llama) use much more complex mechanisms (the “Attention mechanism” and contextual vectors) where a word doesn’t have a single, fixed set of numbers; instead, its “meaning” depends on the context of the sentence. However, this remains a good, intuitively understandable example for explaining the underlying principle.

In short, the model doesn’t “understand” anything in the way we do; it merely calculates and uses the results.

Even if we mention something from the beginning of a conversation with a chatbot, we might get the impression that it “remembers” our chat. However, that is not the case—with every new prompt, the entire conversation from the beginning to the end is sent to the model. This is known as the “context window.” This is also why model providers charge for both input and output text, as computational resources are consumed in both directions.

The following image shows the context window capacities—that is, the total number of tokens a model can handle in a single conversation when all questions and answers are combined—along with the prices per million input and output tokens.

When a company builds an AI agent, for example, it selects a model based on the model’s capabilities and its price. The AI agent is then connected to a remote model via a so-called API and uses its “thinking” services. The final cost is calculated based on the number of tokens sent and received.

Vectors

Computers cannot understand words or images; they only understand numbers. Therefore, human concepts—such as a sentence or an image—are translated into a list of numbers, a vector, so that the computer can process them.

A vector is simply a list of numbers (e.g., [0.1, -0.5, 0.8, 0.0]).

An embedding is a specific type of vector created by a machine learning model to represent meaning (semantics). For general purposes, we can just use the word “vector.”

A key characteristic is semantic proximity. If you convert the sentences “dog bites” and “puppy barks” into vectors, they will be mathematically very close to each other, even though they share no common keywords.

What is the purpose of a vector database?

Once we convert our data, documents, images, or user profiles into vectors, we end up with millions of lists of numbers. A traditional database like SQL is poorly suited for searching through this kind of data, which is why special vector databases exist. Their primary use is:

Semantic Search (searching by meaning)

Traditional search works using keywords. If you search the internet for “how to replace a sink trap?”, a keyword search looks for those exact words. A vector database search, on the other hand, looks for meaning. It might return a document titled “Home Plumbing Guide,” even if the exact words you searched for aren’t in the title, because the vectors are mathematically close.

Leave a Reply